The test is actually testing both of them, to ensure data isn't corrupted after being compressed and then decompressed. On the left, we have an implementation of the LZW compression algorithm, as separate compressor and decompressor libraries. Drawing this (ignoring the source files and other dependencies), we find this kind of structure: We have two binaries, each depending on its own library, and also on a third, shared library.

For this reason, it's important to have a blocklisting system so that exceptions can be marked, and we can avoid bothering people with spurious changelists.Ĭonsider a simple case. There are many other exceptions too, where removing the code would be deleterious. There are, of course, exceptions: some program code is there simply to serve as an example of how to use an API some programs run only in places we can't get a log signal from. If a program hasn't been used for a long time, we try sending a deletion changelist. By aggregating this, we get a liveness signal for every binary used in Google.

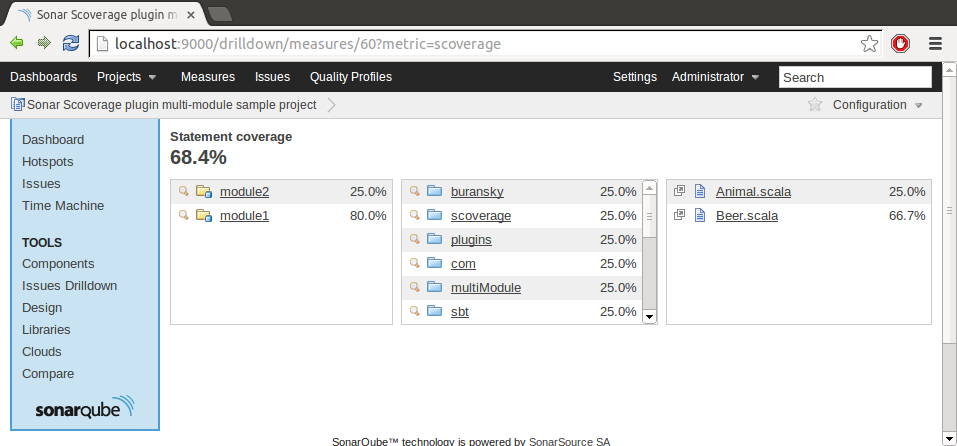

The only real way to know if programs are useful is to check whether they're being run, so for internal binaries (programs run in Google's data centres, or on employee workstations), a log entry is written when a program runs, recording the time and which specific binary it is. That's only a small part of the problem, though: what about all those binaries? All the one-shot data migration programs, and diagnostic tools for deprecated systems? If they don't get removed, all the libraries they depend on will be kept around too. This allows us to find libraries that are not linked into any binary, and propose their deletion. Google's build system, Blaze (the internal version of Bazel) helps us determine this: by representing dependencies between binary targets, libraries, tests, source files and more, in a consistent and accessible way, we're able to construct a dependency graph. Its goal is simple (at least, in principle): automatically identify dead code, and send code review requests ('changelists') to delete it. It submits over 1000 deletion changelists per week, and has so far deleted nearly 5% of all C++ at Google. The Sensenmann project, named after the German word for the embodiment of Death, has been highly successful. So what if we could clean up dead code automatically? That was exactly what people started thinking several years ago, during the Zürich Engineering Productivity team's annual hackathon. However, while this dead code sits around undeleted, it's still incurring a cost: the automated testing system doesn't know it should stop running dead tests people running large-scale cleanups aren't aware that there's no point migrating this code, as it is never run anyway. Sometimes they are cleaned up, but cleanups require time and effort, and it's not always easy to justify the investment.

Indeed, entire projects are created, function for a time, and then stop being useful. Such maintenance cannot easily be skipped, at least if one wants to avoid larger costs later on.īut what if there were less code to maintain? Are all those lines of code really necessary ?Īny large project accumulates dead code: there's always some module that is no longer needed, or a program that was used during early development but hasn't been run in years. It also allows kind-hearted individuals to perform important updates across the whole repository, be that migrating to newer APIs, or following language developments such as Python 3, or Go generics.Ĭode, however, doesn't come for free: it's expensive to produce, but also costs real engineering time to maintain. For example, if an engineer is unsure how to use a library, they can find examples just by searching. This repository, stored in a system called Piper, contains the source code of shared libraries, production services, experimental programs, diagnostic and debugging tools: basically anything that's code-related. At Google, tens of thousands of software engineers contribute to a multi-billion-line mono-repository.
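The idea described above (a dependency graph, a per-binary liveness signal, and a blocklist for exceptions) can be illustrated with a small sketch. This is not Sensenmann's actual implementation, which the post does not show. The function `find_dead_targets`, the target names `main1`, `main2`, `lib1`, `lib2` and `shared`, and the one-year idle threshold are all hypothetical, chosen to mirror the "two binaries, each with its own library, plus a shared library" case. Tests and source files are left out for simplicity.

```python
from datetime import datetime, timedelta


def find_dead_targets(deps, binaries, last_run, now, max_idle, blocklist=frozenset()):
    """Return targets that look like deletion candidates (illustrative only).

    deps:      dict mapping each target to the targets it depends on
    binaries:  set of targets that are runnable programs
    last_run:  dict mapping a binary to the time it was last seen running
    blocklist: targets that must never be proposed for deletion
    """
    # Collect every target mentioned anywhere in the graph.
    all_targets = set(deps) | set(binaries)
    for children in deps.values():
        all_targets.update(children)

    # Roots to keep: binaries that ran recently, plus blocklisted targets.
    roots = {t for t in all_targets if t in blocklist}
    for binary in binaries:
        ran = last_run.get(binary)
        if ran is not None and now - ran <= max_idle:
            roots.add(binary)

    # Everything reachable from a root is still needed.
    keep = set()
    stack = list(roots)
    while stack:
        target = stack.pop()
        if target in keep:
            continue
        keep.add(target)
        stack.extend(deps.get(target, ()))

    return all_targets - keep


# Hypothetical graph: two binaries, each with its own library, plus a shared one.
deps = {
    "main1": ["lib1", "shared"],
    "main2": ["lib2", "shared"],
    "lib1": [],
    "lib2": [],
    "shared": [],
}
binaries = {"main1", "main2"}
now = datetime(2023, 1, 1)
last_run = {"main1": datetime(2020, 1, 1), "main2": datetime(2022, 12, 25)}

dead = find_dead_targets(deps, binaries, last_run, now, timedelta(days=365))
print(sorted(dead))  # ['lib1', 'main1']
```

In this sketch `main1` has not run within the threshold, so it and its private library `lib1` become candidates, while `shared` survives because the live `main2` still depends on it. Passing `blocklist={"main1"}` marks `main1` as an exception and keeps both it and `lib1`.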
